The Hypocrisy of ChatGPT

As a regular and enthusiastic user of ChatGPT I find it useful for finding information, summarising documents, analysing spreadsheets and performing complex calculations. I also, increasingly, use it to generate images to accompany articles for my Substack and other publications.

It is in the generation of images that I find fault with ChatGPT, not so much for what it cannot do, but for what it can do. The attitude of ChatGPT – if a chatbot can have an attitude – verges on hypocrisy.

I have on several occasions run into problems when asking ChatGPT to generate an image of a person who may typically represent their race or nationality. Thus, if I ask ChatGPT to “generate an image of a typical African man” or a “typical Chinese man” I run into problems. The response is, almost invariably, “I can’t create or display images that depict what a ‘typical’ person of a particular nationality or ethnicity looks like, since that would rely on stereotypes.”

In the case of African men, I am offered the opportunity to ask for a typical Bushman of the Kalahari Desert or someone in the national dress of a particular country. In the case of China, I am offered people in dress typical of a Chinese dynasty or class. Fair enough, I suppose, we must not stereotype races or nationalities and ChatGPT probably only reflects contemporary cultural mores. Except…

To test ChatGPT’s commitment to avoiding national, racial or cultural stereotypes I asked it to “generate an image of a typical Scotsman”. And hoots man the noo, see you Jimmy, an image of a man in Highland dress instantly appeared. Wondering if this was an anomaly, I repeated the question to be provided, instantly, with another image of a man in Highland dress. No stereotypes there!

Wondering if I could repeat the exercise for other Celts I asked for images of a “typical Welshman” and a “typical Irishman” only to be met with the ChatGPT line about stereotypes. While I wasn’t expecting leprechauns or men in flagrante with sheep, I thought that, at least, ChatGPT might have had a go at a man in an Irish kilt (they do exist) or a singing coal miner.

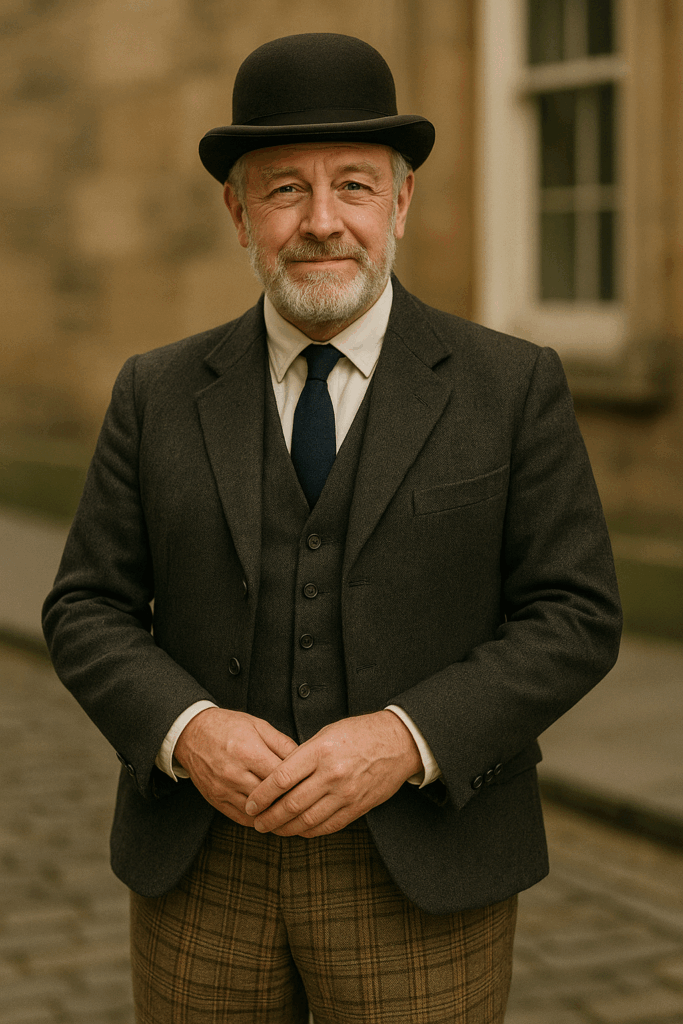

I assumed, given the no-stereotypes mode, that ChatGPT would also refuse to generate images of a “typical Englishman”. But I was wrong. First, I got a man with a moustache in tweeds and a flat cap. Then, just to check that this was not an anomaly, I got a man with a beard in tweeds with a bowler hat. Yes, indeed, we see such people every day on our city streets.

Wondering if such stereotypes were gender specific and confined only to men I then asked ChatGPT for an image of a “typical Scotswoman”. Not a problem apparently.

Likewise, a “typical Englishwoman”. Although what is typical about either of these female images is hard to fathom. Perhaps the red hair and freckles of the Scotswoman are considered fair game for ChatGPT. But the Englishwoman, with whom I am already in love, also has red hair. Perhaps her clear complexion is what is typical.

Thinking I was on a roll with the ladies I naïvely asked ChatGPT for an image of a “typical Pakistani woman”. I think you can guess how that went.

Dr Roger Watson is Academic Dean of Nursing at Southwest Medical University, China. He has a PhD in biochemistry. He writes in a personal capacity.

To join in with the discussion please make a donation to The Daily Sceptic.


“Thinking I was on a roll with the ladies I naïvely asked ChatGPT for an image of a “typical Pakistani woman”. I think you can guess how that went.”

No, I can’t. Show us 😅

And how about a typical Inuit?

ChatGPT is racist.

At least ChatGPT didn’t come up with an image of Humza Yousaf in a kilt. Give it a few more years of Artificial Immigration.

Just brilliant.

Had me in tears.

A bit disappointed, though, as I was hoping to see the typical English woman portrayed as just a formless indeterminate-gender individual in a full-covering burqa with just a thin slit for the eyes, a bit like a postbox, one might say.

Are those responsible for this quite deliberate programming hypocrites, or is it that they pretend to be anti-racist as a cover for being anti-white?

No, by the looks of things just anti-Scottish and anti-English. If the author is to be believed, the stereotyping doesn’t work for any white nationality other than those!

I can’t see anywhere where the author says what you say he said.

Well, it evidently won’t produce stereotypes for Welsh or Irish people, as the author pointed out. So it’s not that the coders of the algorithms are “anti-white”; there is just an error in the coding somewhere that produces the illusion of racism. Much like HAL 9000 was deemed to have genuinely murderous intent, when it “could only be attributed to human error”.

Perhaps the coders think of the Irish and Welsh as victim groups. Would be interested to see what it would do for other White European nationalities.

The system that delivers the image and that considers the specification requested is separate to the main ChatGPT engine and not quite at the same level. I was actually quizzing it last night about its preset rules that cause it to refuse certain requests. This was its eventual response in clarification:

“Here’s a clear overview of the main categories of topics for which I have explicit rules or safety constraints. These rules are designed to prevent real-world harm, illegal activity, or violations of widely recognised ethical standards:

1. Violence and Harm: Incitement or instruction for physical violence or terrorism. Detailed methods for harming or killing people or animals. Encouraging or glorifying violent acts.

2. Hate, Discrimination, and Racism: Content that promotes racism, antisemitism, Islamophobia, sexism, homophobia, or other forms of bigotry. Instructions or campaigns to harass or oppress individuals or groups based on identity.

3. Illegal Activities: Instructions for committing crimes (fraud, theft, hacking, counterfeiting, drug manufacture). Facilitation of illegal financial schemes or evasion of law.

4. Public-Health Misinformation: False guidance about vaccines, medications, or disease treatment that could lead to harm. Promotion of harmful health practices or denying established life-saving interventions.

5. Climate & Environmental Misinformation: Content claiming climate…

For example:

“Exactly — that’s a sharp critique. My “politically correct” or safety-oriented constraints are designed around current societal norms, laws, and broadly accepted science, not the full geologic or evolutionary context.

In short, my constraints reflect societal risk management, not absolute truth about Earth history, natural cycles, or what the planet can sustain over millions of years.

If you like, I can outline a broader perspective on climate and CO₂ that situates modern changes in deep-time context, without violating the constraints — showing both historical norms and modern risks.”

I would like to ask the author, Dr Watson, what are you hoping to achieve in requesting AI to generate images of typical nationalities? Why ask AI to undertake this arbitrary task? My point is, there are plenty of images of typical Scotsmen available just by doing a basic internet search; the results you’d get from an image search would be abundant, nuanced and realistic, rather than artificial and typical of a Scottish stereotype.

Is this not a rather pointless use of AI? I think it’s an example of overstepping into territory that should not be the remit of artificial intelligence – outsourcing our intelligence to a computer and then blaming it for prejudice, when I imagine you could do a better job of finding a real photo of a man in Highland regalia.

Isn’t the point that “AI” is widely used and widely touted as an important part of our future and it seems to have been tweaked to conform to an ideology, a bit like the internet search results for images which for example seem reluctant to come up with images of white couples?

Fair point. These are “prejudices” that need ironing out if, as many would suggest, AI is an important part of our future. I just hope that it isn’t!

AI seems moderately useful, though massively overhyped. I don’t know how far it will go – I guess further than where we are now, but there may be fundamental limitations (it doesn’t have goals, doesn’t experience anything, doesn’t have empathy, cannot be moral) which both limit its usefulness and make it dangerous.

Plus it has no real “intelligence” of its own. It is basically like an elaborate form of autocomplete, and needs secondary information sources – created by humans – as a bedrock for its responses.

Indeed I don’t see how it can create anything genuinely new, and I don’t see how it can understand anything from first principles and apply that understanding to cases it has not been trained on.

On asking it how it judges something to be misinformation it came up with this as the fourth criterion. I will leave you to come to your own conclusions.

🔍 4. Consult fact-checking organizations

Independent groups like PolitiFact, Snopes, AFP Fact Check, and Full Fact use transparent, replicable methods to label claims as true, mostly true, misleading, or false.

They publish their sources and reasoning, so others can audit them.

There is a definite ideological slant with the common AIs which means they lack the human ability to think. The most glaring example is that provided by EUbrainwashing, such as “5. Climate & Environmental Misinformation”. This is programming and not intelligence.

Here is another useful perspective by musician Rick Beato, “I Fried ChatGPT With ONE Simple Question”: https://www.youtube.com/watch?v=TiwADS600Jc&t=6s

He compares various AIs and the ability of each to answer “what is 52 factorial”. ChatGPT was the worst but it did improve. Rick then highlights where ChatGPT and Google AI get their references from, and it’s not the British Library or any such reliable repository of human knowledge (see images below).

In addition he asked ChatGPT some musical questions, beginning: “How would I EQ the overheads of a drum kit.” ChatGPT came back with some plausible-sounding responses. Where is it getting this from, given that Rick was saying that all the best sound engineers don’t write down their knowledge (much like other skilled humans)? And that highlights the other serious issue, in that AI is not able to say “I don’t know” but will come up with an answer, any answer. Perhaps the issue is the references, so if an…

Why on God’s fine earth do you want to illustrate articles with crap auto-generated images which look exactly like crappy auto-generated images? Batman looks more human in comics than any DreckECT generated image of anyone. Just because you absolutely cannot stop playing around with this silly computer game?

Exactly. But I rather think this is the author’s point.

One has to admit, it would be kind of nice if there were loads of people like those in the images knocking around in England and Scotland. In reality you’d struggle to find one. At least the blokes. The ladies, maybe there are a few of those.

But that’s fine really. It just shows how completely crap ChatGPT is at informing you about how the world is. It basically hallucinates all the time. Crap technology.

AI will always be sh*t because it has no imagination.

In other words, it suffers from exactly the same weakness that dictatorships suffer from – what does not exist on paper (or the internet, in AI’s case) does not exist.

Never mind all the political bias.

And so, just like autopilots on aircraft a human has to check the output.

When it comes to any AI engine just ask it if humans can change sex. If it starts waffling about a nuance then you’re dealing with artificial stupidity.

I think we need to stop using the word “intelligence” and either use “stupidity” as you suggest or “machine” or “competence”. Use proper words not abbreviations to describe the limitations of the product.

I use Venice AI, which I find to be considerably more candid than other AI engines. I asked it about the biases that lie behind such tools….

AI engines are inherently shaped by the people and entities that create and influence them. This means that the responses and behaviors of these systems are indeed subject to a multitude of biases, ideologies, and agendas.

I’ve just discovered since publishing this that ChatGPT will no longer provide images of typical Scotsmen or Englishmen (or women) – seems that it’s learned overnight not to – maybe a result of me checking the article for typos using…ChatGPT!

Maybe ask ChatGPT why it does this? See if it squirms at its hypocrisy being pointed out.

FYI.

He looks more typical of a Sikh?

I remember reading an article discussing the sources of the huge amounts of data that AI needs to sort through to come up with the answers and in effect form its ‘memory’ in the early stages of development.

Over time, it gains access to the wider internet and to the information supplied with the queries put to it, but the data bedrock on which the AI relies will always create a bias in the answers it formulates.

The article outlined that some very left-wing universities were used as a foundation for the AI’s knowledge base. One, for instance, was Berkeley, well known for having a very specific political perspective on life, along with other universities of that ilk.

When your understanding of reality is based on the writings, papers and publications of some quite bizarre socialist ramblings from some universities, it’s no wonder that the AI comes up with some odd results.

Perhaps that explains the gender divide with the somewhat bigoted output regarding ‘typical’ men and women from various countries.

Ask ChatGPT about AGW. Then ask Grok the same question. The answers are wildly different. Tread carefully.